FIELD DISPATCH - SUBCUTANEOUS FRONT, SECTOR 7-BRAVO

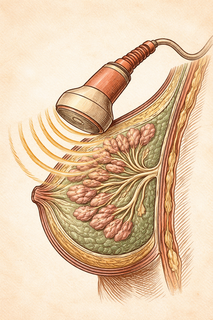

Reporting live from somewhere between the pectoralis major and the skin surface, where an ultrasound probe just made contact. The terrain is dense, echogenic, and - frankly - confusing. Radiologists have been squinting at these grayscale landscapes for decades, trying to distinguish friend from foe in a sea of shadowy blobs. Reinforcements have arrived. They're artificial, they're intelligent, and they were trained on 3.5 million images. This is not a drill.

The Reinforcements Have a Name: BUSGen

A team of researchers just dropped what might be the most overprepared AI system in breast imaging history. BUSGen - short for Breast Ultrasound Generative model - is a foundation generative model that learned breast anatomy the way some of us learned Netflix categories: by consuming an almost incomprehensible volume of content. Over 3.5 million breast ultrasound images, to be precise (Yu et al., 2026).

But here's where it gets genuinely interesting. BUSGen doesn't just look at ultrasound images. It makes them. Realistic, clinically useful synthetic breast ultrasound images that can then be used to train other AI models for screening, diagnosis, and prognosis. It's an AI that builds training camps for other AIs. Somewhere, a philosopher is having a crisis.

Why Ultrasound Needs a Lifeline

Breast ultrasound is the unsung workhorse of cancer detection, particularly for women with dense breast tissue - which, incidentally, describes over 50% of women in some populations. Unlike mammography, ultrasound doesn't involve ionizing radiation and can catch tumors that mammograms miss in dense tissue. The catch? Interpreting ultrasound images is notoriously subjective. Hand a scan to five radiologists and you might get five different opinions and one existential argument.

The bigger problem is data scarcity. Training robust AI models for medical imaging requires enormous, well-labeled datasets - the kind that are expensive, time-consuming, and wrapped in enough privacy regulations to make a lawyer weep. Rare pathological cases are, by definition, rare. You can't just order more cancer samples from a catalog.

The Synthetic Data Play

BUSGen's party trick is "few-shot adaptation." Give it a handful of real examples of a specific clinical scenario, and it generates entire repositories of synthetic images that are statistically faithful to the real thing. These fake-but-not-fake images can then train downstream AI models for specific tasks without ever exposing actual patient data.

This is less "deepfake" and more "really good study guide." The synthetic images maintain the pathological features and clinical variation of real scans while being completely de-identified. Patient privacy advocates, you may exhale.

The concept of using generative AI for synthetic medical data has been gaining traction across radiology (Khosravi et al., 2024), but BUSGen is arguably the most ambitious application in ultrasound to date. Foundation models in radiology are increasingly recognized for their potential to bridge data gaps while addressing privacy constraints (Katabathina et al., 2024).

Beating the Humans (By a Lot)

Here's the number that will make radiologists do a double-take: models built with BUSGen's synthetic data outperformed all nine board-certified radiologists tested in early breast cancer diagnosis, with an average sensitivity improvement of 16.5% (P < 0.0001).

Let that marinate. Not "comparable to." Not "approaching." Exceeded. Every. Single. One.
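For anyone rusty on the metric: sensitivity is the share of actual cancers that get flagged, or true positives divided by true positives plus false negatives. Here is a minimal sketch of that arithmetic. The counts are hypothetical and picked for round numbers, not taken from the study, and the sketch assumes the improvement is measured in percentage points, which the summary above does not spell out.

```python
def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Fraction of actual cancers that were correctly flagged."""
    total_cancers = true_positives + false_negatives
    if total_cancers == 0:
        raise ValueError("need at least one actual positive case")
    return true_positives / total_cancers


# Hypothetical counts for illustration only (not data from the paper).
reader_sensitivity = sensitivity(true_positives=70, false_negatives=30)
model_sensitivity = sensitivity(true_positives=85, false_negatives=15)

# Difference expressed in percentage points (an assumption about the unit).
gain_points = (model_sensitivity - reader_sensitivity) * 100
print(f"reader: {reader_sensitivity:.2f}, model: {model_sensitivity:.2f}")
print(f"gain: {gain_points:.1f} percentage points")
```

Note what sensitivity leaves out: it says nothing about false alarms, which is why it is usually reported alongside specificity.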

This isn't entirely without precedent - AI systems have been creeping up on radiologist-level performance in breast imaging for a few years now. A recent multi-center study found AI diagnostic systems achieving an AUC of 0.948 for breast ultrasound, matching radiologists with a decade of experience and outperforming less experienced practitioners (Hayashi et al., 2025). But BUSGen's edge is that it achieved its results using synthetic training data, which means the approach could theoretically be scaled anywhere, even in resource-limited settings with small local datasets.
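If "AUC" reads like alphabet soup, one way to think about it is as a betting game: pick one malignant case and one benign case at random, and ask how often the model gives the malignant one the higher score. The sketch below computes exactly that by brute force. The scores are invented for illustration, and the function name `pairwise_auc` is ours, not something from the cited study.

```python
def pairwise_auc(malignant_scores, benign_scores):
    """Probability that a random malignant case outscores a random benign one.

    Ties count as half a win, which matches the usual rank-based AUC.
    """
    if not malignant_scores or not benign_scores:
        raise ValueError("need at least one case in each group")
    wins = 0.0
    for m in malignant_scores:
        for b in benign_scores:
            if m > b:
                wins += 1.0
            elif m == b:
                wins += 0.5
    return wins / (len(malignant_scores) * len(benign_scores))


# Hypothetical model scores (higher = more suspicious), not real data.
malignant = [0.9, 0.8, 0.4]
benign = [0.7, 0.3, 0.2]
print(f"AUC: {pairwise_auc(malignant, benign):.3f}")
```

An AUC of 0.5 is a coin flip and 1.0 is a perfect ranking, so 0.948 means the model wins that bet about 95 times out of 100.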

The Scaling Effect (Or: More Fake Data = Better Real Results)

The researchers also characterized something they call the "scaling effect" of synthetic data - basically, how much better do things get as you feed the downstream models more and more generated images? The answer appears to be: meaningfully better, with diminishing returns at the extreme end, which tracks with what we see in other domains. It's a useful finding because it suggests there's a practical sweet spot between "not enough training data" and "we generated so many fake ultrasounds that our servers are crying."
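To make the "sweet spot" idea concrete, here is a rough sketch of how one might locate it: walk along a curve of dataset size versus downstream performance and stop where the next jump in size buys less than some minimum gain. Everything here is an assumption for illustration, including the function name `sweet_spot`, the threshold, and the numbers, none of which come from the paper.

```python
def sweet_spot(sizes, scores, min_gain):
    """Return the first dataset size where the next step up adds < min_gain.

    sizes and scores are parallel lists, ordered from smallest to largest size.
    If every step still pays off, the largest size is returned.
    """
    if len(sizes) != len(scores) or not sizes:
        raise ValueError("sizes and scores must be non-empty and equal length")
    for i in range(len(sizes) - 1):
        if scores[i + 1] - scores[i] < min_gain:
            return sizes[i]
    return sizes[-1]


# Hypothetical curve: synthetic image count vs. downstream AUC (made up).
synthetic_counts = [1_000, 10_000, 100_000, 1_000_000]
downstream_auc = [0.80, 0.88, 0.91, 0.915]

best = sweet_spot(synthetic_counts, downstream_auc, min_gain=0.01)
print(f"diminishing returns set in after about {best:,} images")
```

Where the threshold sits is a judgment call: a team with spare compute might chase the last half-point, while a small clinic probably would not.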

What This Actually Means

BUSGen isn't replacing your radiologist. (Your radiologist can relax. Slightly.) What it is doing is solving one of medical AI's most stubborn bottlenecks: the data problem. By generating high-quality synthetic data that respects patient privacy, it could democratize access to advanced AI-assisted breast cancer detection - particularly in healthcare systems that lack the massive annotated datasets typically hoarded by well-funded academic centers.

The model also opens the door to secure data sharing between institutions. Instead of shipping around actual patient scans with all the regulatory headaches that entails, hospitals could share synthetic datasets that capture the same clinical patterns. It's privacy-preserving collaboration, which is about as close to a win-win as healthcare IT ever gets.

Breast cancer remains the most commonly diagnosed cancer among women worldwide. Anything that makes early detection faster, more accurate, and more accessible isn't just interesting science - it's the kind of work that changes the math on survival rates.

References:

- Yu, H., Li, Y., Zhang, N., et al. A foundation generative model for breast ultrasound image analysis. Nature Biomedical Engineering (2026). DOI: 10.1038/s41551-026-01639-1. PMID: 41946926

- Shin, H.J., Han, K., Ryu, L., & Kim, E.K. The evidence and concerns about screening ultrasound for breast cancer. Cancer Biology & Medicine, 22(4), 295 (2025). PMCID: PMC12032836

- Katabathina, V.S., et al. Foundation Models in Radiology: What, How, Why, and Why Not. Radiology (2024). DOI: 10.1148/radiol.240597

- Khosravi, B., et al. Generating Synthetic Data for Medical Imaging. Radiology (2024). DOI: 10.1148/radiol.232471

- Hayashi, N., et al. Evaluation of AI diagnostic systems for breast ultrasound: comparative analysis with radiologists and the effect of AI assistance. Japanese Journal of Radiology (2025). DOI: 10.1007/s11604-025-01809-2. PMCID: PMC12479558

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.
