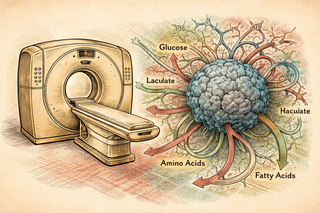

Most cancer scans tell you what the tumor looks like - size, shape, spread, whether it seems to be causing trouble like a tiny criminal with a real estate portfolio. Useful, yes. But looks are not the whole story. Tumors also have habits. They burn fuel differently, hoard nutrients, and rewire metabolism like they are trying to dodge both chemotherapy and your immune system's security team. Frankly, very rude behavior [3,4].

That is what makes this study different. The authors did not just throw more data into a blender and call it innovation. They built patient-specific metabolic models from transcriptomics data, then fused those models with 3D CT imaging data using multimodal deep learning [1]. Translation: they tried to connect what the tumor looks like with what it may actually be doing under the hood.

And in ovarian cancer, that matters. This disease is notorious for showing up late, spreading through the abdominal cavity, and behaving less like one tidy lump and more like a chaotic franchise operation. Even when two patients carry the same diagnosis, their tumors can run very different biological playbooks [3,5].

The tumor’s secret side hustle

Cancer metabolism is one of those fields that sounds niche until you realize it is basically the tumor’s finance department. If cancer cells want to grow fast, survive stress, and keep T cells stuck outside like security guards locked out of a sketchy club, they need energy and raw materials. So they reprogram glucose use, lipid handling, amino acid uptake, and mitochondrial activity to keep the whole scam afloat [3,4].

This paper leans into that reality. Instead of treating metabolism as background noise, it makes metabolism part of the prediction engine. The model used imaging plus transcriptomics-derived metabolic features, and the authors report that this improved risk stratification while also pointing to specific metabolic reactions that may help explain why the model made the predictions it did [1].
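For the code-curious, here is a toy picture of what "imaging plus metabolic features, with reactions you can point to" might look like. This is a minimal sketch, not the authors' pipeline: the real study uses deep learning, while this stand-in glues two feature vectors together and scores them with a plain linear head so the per-feature contributions are easy to read. Every name, feature, and weight below is hypothetical and made up for illustration.

```python
def fuse(imaging_embedding, metabolic_fluxes):
    """Fusion by concatenation: one combined feature vector per patient."""
    return list(imaging_embedding) + list(metabolic_fluxes)


def risk_score(features, weights, bias=0.0):
    """Linear risk head (toy stand-in for a deep network): higher = riskier."""
    return bias + sum(w * x for w, x in zip(weights, features))


def contributions(features, weights, names):
    """Per-feature contribution (weight * value), largest magnitude first."""
    pairs = [(name, w * x) for name, w, x in zip(names, weights, features)]
    return sorted(pairs, key=lambda p: abs(p[1]), reverse=True)


# Hypothetical toy patient: 2 CT-derived features and 3 reaction fluxes.
names = [
    "ct_feat_0",
    "ct_feat_1",
    "glycolysis_flux",
    "fatty_acid_oxidation_flux",
    "glutamine_uptake_flux",
]
imaging = [0.5, -1.0]
fluxes = [2.0, 0.5, 1.0]
weights = [0.2, 0.1, 0.4, -0.3, 0.25]  # invented numbers, not learned from data

x = fuse(imaging, fluxes)
score = risk_score(x, weights)
top = contributions(x, weights, names)

print(f"risk score: {score:.2f}")
for name, value in top[:3]:
    print(f"{name}: {value:+.2f}")
```

In this toy, the glycolysis feature dominates the score, which is the flavor of answer you want when someone asks "high risk based on what exactly?" A real deep model would need a proper attribution method to get there; weight-times-value only works because the head here is linear.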

That last part is a big deal. In cancer AI, one of the recurring headaches is the black-box problem. A model says "high risk," and everyone in the room nods politely while thinking, "Cool, but based on what exactly?" Reviews of multimodal cancer AI keep making the same point: performance is nice, but interpretability is what keeps a tool from becoming an expensive horoscope [2]. This study tries to do both.

Why this is actually interesting in the real world

If these findings hold up in larger and more diverse cohorts, this kind of approach could help move ovarian cancer care toward something more specific than broad category labels. Not just "ovarian cancer, high grade, bad actor," but "this tumor has imaging features and metabolic wiring linked to higher risk." That could eventually help with prognosis, monitoring, and maybe even treatment planning if the metabolic signals line up with drug vulnerabilities [1,5].

There is already momentum behind multimodal approaches in ovarian cancer. Other groups have shown that combining imaging with genomic or pathology data can improve risk stratification or predict chemotherapy response better than single-modality models alone [5,6]. So this paper is not arriving out of nowhere. It is more like the next heist sequel - except this time the crew added metabolism, which turns out to be the person in the van who actually knows the building layout.

The catch, because there is always a catch

Before we start acting like every CT scanner is about to become Sherlock Holmes with a biochemistry minor, a few reality checks matter.

Radiomics and multimodal AI in ovarian cancer still face very real problems with validation, reproducibility, and generalizability [2,6]. Many studies are retrospective. External validation can be sparse. Different scanners, imaging protocols, patient populations, and preprocessing choices can all nudge a model off course. Cancer biology is messy, and machine learning has a bad habit of looking brilliant right up until you show it a hospital it has never met before.

Also, the study reports better survival estimation and risk stratification - it does not yet show that acting on those predictions improves outcomes [1]. That is the difference between finding a sharper map and proving the map gets people safely home.
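If you are wondering what "better risk stratification" looks like as a number, one common yardstick in survival modeling is the concordance index: of all the patient pairs where we know who had the event first, how often did the model give the higher risk to that patient? Whether the study reports this exact metric is an assumption on my part, and the patients below are invented. It is a sketch of the idea, nothing more.

```python
def concordance_index(times, events, risks):
    """Harrell's C: share of comparable patient pairs ranked correctly.

    times  - follow-up time per patient
    events - 1 if the event was observed, 0 if censored
    risks  - model risk score per patient (higher = predicted worse)
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair counts only if patient i had an observed event
            # strictly before patient j's follow-up time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5  # ties get half credit
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical cohort of four patients (follow-up in months).
times = [5, 12, 20, 30]
events = [1, 1, 0, 1]  # third patient is censored
risks = [0.9, 0.4, 0.6, 0.1]

c_index = concordance_index(times, events, risks)
print(f"C-index: {c_index:.2f}")
```

A score of 0.5 is coin-flip ranking and 1.0 is perfect ordering. Note what the metric does not tell you: it grades the map, not whether anyone got home safely by following it.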

Still, it is a clever step. The study treats ovarian cancer less like a static object to be photographed and more like a living system with metabolic motives. As an immunology nerd, I have to respect that. Tumors do not just hide from the immune system in a fake mustache - they remodel the whole neighborhood, snack aggressively, and mess with the local chemistry while they are at it [4]. Any model that catches some of that behavior is playing a more serious game.

References

1. Eftekhari N, Verma S, Saha A, et al. Fusing imaging and metabolic modeling via multimodal deep learning in ovarian cancer. Cell Systems. 2026. DOI: https://doi.org/10.1016/j.cels.2026.101594 . PubMed: https://pubmed.ncbi.nlm.nih.gov/42025163/

2. Steyaert S, Pizurica M, Nagaraj D, et al. Multimodal data fusion for cancer biomarker discovery with deep learning. Nature Machine Intelligence. 2023;5(4):351-362. DOI: https://doi.org/10.1038/s42256-023-00633-5 . PMCID: https://pmc.ncbi.nlm.nih.gov/articles/PMC10484010/

3. Wang M, Zhang J, Wu Y. Tumor metabolism rewiring in epithelial ovarian cancer. Journal of Ovarian Research. 2023;16(1):108. DOI: https://doi.org/10.1186/s13048-023-01196-0 . PMCID: https://pmc.ncbi.nlm.nih.gov/articles/PMC10240809/

4. Tang PW, Frisbie L, Hempel N, Coffman L. Insights into the tumor-stromal-immune cell metabolism cross talk in ovarian cancer. American Journal of Physiology-Cell Physiology. 2023;325(3):C731-C749. DOI: https://doi.org/10.1152/ajpcell.00588.2022 . PubMed: https://pubmed.ncbi.nlm.nih.gov/37545409/

5. Crispin-Ortuzar M, Woitek R, Reinius MAV, et al. Integrated radiogenomics models predict response to neoadjuvant chemotherapy in high grade serous ovarian cancer. Nature Communications. 2023;14(1):6756. DOI: https://doi.org/10.1038/s41467-023-41820-7 . PMCID: https://pmc.ncbi.nlm.nih.gov/articles/PMC10598212/

6. Boehm KM, Aherne EA, Ellenson L, et al. Multimodal data integration using machine learning improves risk stratification of high-grade serous ovarian cancer. Nature Cancer. 2022;3(6):723-733. DOI: https://doi.org/10.1038/s43018-022-00388-9 . PMCID: https://pmc.ncbi.nlm.nih.gov/articles/PMC9239907/

Disclaimer: The image accompanying this article is for illustrative purposes only and does not depict actual experimental results, data, or biological mechanisms.